When Will Voice AI Cross the Chasm?

Adopters, Use Cases, and Blockers

Voice AI is becoming increasingly prevalent, with the global market projected to grow from $12 billion in 2022 to nearly $50 billion by 2029 (Statista, 2024). Although tech giants such as OpenAI, Google, and Microsoft have integrated voice interfaces into their AI offerings, older assistants such as Siri still dominate usage, and adoption remains fragmented. We researched early adopters of Voice AI: who is using it, how they are using it, and what is holding it back from going mainstream. Voice AI has carved out a role in hands-free convenience and smart home control, but it still struggles with natural interaction, accuracy, privacy concerns, and a lack of straightforward, everyday utility.

A Lesson from the First Wave of Wearable AI

The first wave of wearable AI shows what happens when technology feels more like a gimmick than something people rely on. Many of these devices were slow, awkward, or simply didn’t solve real problems. The Rabbit R1 fell short on usability: users were frustrated by slow responses, limited features, and reliance on a separate web interface for settings. A 2024 WIRED review put it bluntly: “I have found the Rabbit R1 fairly useless over the past week I've been testing it…the biggest issue I have…is finding a use for it. Not only do I now have to carry a second device everywhere I go, but more often than not, I end up pulling out my phone to finish the task the R1 can't complete. This red-orange gadget isn't a personal assistant, it's dead weight.”

Similarly, the Humane AI Pin was widely criticised for slow, awkward, and unreliable interactions, leading to over $1 million in product returns (The Verge, 2024). For Voice AI to take off, it must avoid the same pitfalls by focusing less on hype and more on delivering intuitive, genuinely valuable experiences that fit into people’s lives and work.

Adopters

We found that Voice AI adopters tend to fall into these categories:

- Frequent Users: Those who integrate voice assistants like Siri, Alexa, or ChatGPT into their daily routines, often using them for efficiency and convenience.

- Tech Enthusiasts: People who actively experiment with Voice AI across multiple devices and applications.

- Situational Users: Those who turn to Voice AI in specific scenarios, like hands-free control while driving or multitasking.

- Industrial Users: Workers and operators who rely on Voice AI for hands-free control, data input, responses, and guidance on the job (see below).

Consumer Use Cases

Respondents to a recent survey we conducted highlighted the most common use cases for Voice AI:

- Hands-Free Convenience: 4 out of 5 users rely on Voice AI when they cannot use their hands, such as while driving or cooking.

- Information and Entertainment: Nearly 40% of respondents use Voice AI for information and entertainment, such as music, podcasts, language learning, and general exploration of topics.

- In-Car Navigation: A quarter of respondents use Voice AI for in-car navigation. The automotive industry is one of the fastest-growing markets for Voice AI because hands-free interaction makes driving safer and more convenient. McKinsey predicts that voice-enabled AI assistants will become standard in connected vehicles by 2030, with a shift towards proactive suggestions and real-time driver assistance (McKinsey, 2023). One user in our early testing summed it up: "It just makes things easier - especially when I’m driving."

- Smart Home Integration: Roughly one in four users employs Voice AI to control smart devices such as lights, thermostats, and security systems.

- Wearables: Although early wearable AI devices such as the Rabbit R1 and the Humane AI Pin struggled (The Times, 2024; WIRED, 2024), Meta’s smart glasses and fitness wearables like Whoop are keeping the category alive (The Verge, 2024). The next step is adding natural voice control and interaction to these devices, which have limited input options. Meta’s smart glasses, for example, have been updated with voice AI features aimed at improving the user experience (The Verge, 2024).

Industrial Use Cases

In industrial settings, Voice AI can improve worker efficiency by enabling hands-free data entry and instructions, improve safety by minimising distractions, and streamline communication through voice commands, which can raise productivity and accuracy across the manufacturing chain. Key applications include managing inventory, scheduling maintenance, conducting quality control checks, and providing real-time operational updates.

Key benefits:

- Hands-free operation: Workers can issue commands and access information verbally, reducing the need for manual input on keyboards or touch screens. This is especially useful when their hands are occupied or gloved.

- Improved accuracy: Voice AI systems can validate commands and flag inconsistencies in real time, minimising errors caused by fatigue or distractions.

- Enhanced safety: By enabling hands-free interaction, Voice AI can reduce the risk of accidents caused by looking at screens while operating machinery.
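To illustrate the "Improved accuracy" point, here is a minimal Python sketch of validating a transcribed command before acting on it. It assumes speech has already been converted to text, and the command grammar (`move N units of PART to bin B`), the in-memory inventory, and the `validate` function are all hypothetical; a real deployment would check against its own inventory or plant systems.

```python
import re
from dataclasses import dataclass

# Hypothetical inventory state (part number -> units on hand) and valid bins.
INVENTORY = {"A-113": 120, "B-204": 15}
VALID_BINS = {1, 2, 3, 7}

# Hypothetical command grammar for a transcribed utterance.
COMMAND = re.compile(r"move (\d+) units? of ([a-z]-\d{3}) to bin (\d+)", re.IGNORECASE)


@dataclass
class Validation:
    ok: bool
    message: str  # text to speak back to the worker


def validate(transcript: str) -> Validation:
    """Check a transcribed command against known state before executing it."""
    match = COMMAND.fullmatch(transcript.strip())
    if match is None:
        return Validation(False, "Sorry, I didn't catch that. Please repeat the command.")
    qty, part, bin_no = int(match.group(1)), match.group(2).upper(), int(match.group(3))
    if part not in INVENTORY:
        return Validation(False, f"I don't recognise part {part}.")
    if qty > INVENTORY[part]:
        return Validation(False, f"Only {INVENTORY[part]} units of {part} are on hand.")
    if bin_no not in VALID_BINS:
        return Validation(False, f"Bin {bin_no} doesn't exist.")
    # Read the parsed command back so the worker can catch a misrecognition.
    return Validation(True, f"Moving {qty} units of {part} to bin {bin_no}. Confirm?")


print(validate("Move 40 units of A-113 to bin 7").message)   # Moving 40 units of A-113 to bin 7. Confirm?
print(validate("Move 400 units of A-113 to bin 7").message)  # Only 120 units of A-113 are on hand.
```

Reading the parsed command back before executing it is a cheap guard against recognition errors, which matters most in the noisy settings discussed below.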

Specific use cases of Voice AI in industrial settings:

- Inventory management

- Machine operation

- Maintenance scheduling

- Dictation and data entry

- Training and guidance

- Real-time instruction and support during complex tasks

A key challenge is noise. Industrial settings are loud, with machinery, side conversations, and other distractions, which makes accurate speech recognition and low-latency responses much harder to achieve.
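One classic, deliberately simple building block for noisy environments is an energy-based voice activity detector that tracks the background noise level and only flags audio that rises clearly above it. The Python sketch below assumes mono audio already split into frames of float samples in [-1, 1]; the function names and the `margin_db` and `alpha` values are illustrative assumptions, and production systems typically use trained neural VADs and noise suppression rather than a fixed energy margin.

```python
import math
from typing import Iterable, List


def rms_db(frame: List[float]) -> float:
    """Root-mean-square level of one frame in dBFS (samples assumed in [-1, 1])."""
    mean_square = sum(s * s for s in frame) / len(frame)
    return 10 * math.log10(max(mean_square, 1e-12))  # floor avoids log10(0) on silence


def detect_speech(frames: Iterable[List[float]], margin_db: float = 10.0,
                  alpha: float = 0.05) -> List[bool]:
    """Flag frames whose level exceeds an adaptive noise-floor estimate by margin_db."""
    noise_floor = None
    flags = []
    for frame in frames:
        level = rms_db(frame)
        if noise_floor is None:
            noise_floor = level  # assume the stream starts with background noise
        is_speech = level > noise_floor + margin_db
        if not is_speech:
            # Update the floor only on non-speech frames so speech doesn't inflate it.
            noise_floor = (1 - alpha) * noise_floor + alpha * level
        flags.append(is_speech)
    return flags


# Demo: 10 ms frames (160 samples at an assumed 16 kHz) of quiet noise, then louder "speech".
noise = [0.01, -0.01] * 80
speech = [0.3, -0.3] * 80
print(detect_speech([noise] * 3 + [speech] * 2 + [noise] * 2))
# [False, False, False, True, True, False, False]
```

Because the floor adapts, steady machine hum gradually raises the threshold. The trade-off is that speech only slightly louder than the background, which is common on a factory floor, can go undetected; that is one reason learned VADs are preferred in such environments.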

The Shift from Commands to More Natural Interactions

Even the most engaged Voice AI users still default to practical, task-based applications such as setting reminders, scheduling, and managing to-do lists. But expectations are evolving. Users want AI to be more than reactive: they want it to anticipate needs, offer recommendations, and fit into their daily routines. Early testers of Elyce, SMPL’s voice app, expressed interest in an AI that could check in on them (“How was your day?”) or make intelligent recommendations based on their personality and preferences. Beyond personalisation, users also expect deeper integrations across their apps and devices. As one participant in our testing put it: “If it could interact with my calendar - remind me about calls, book a restaurant, or structure my day - that would be cool.”

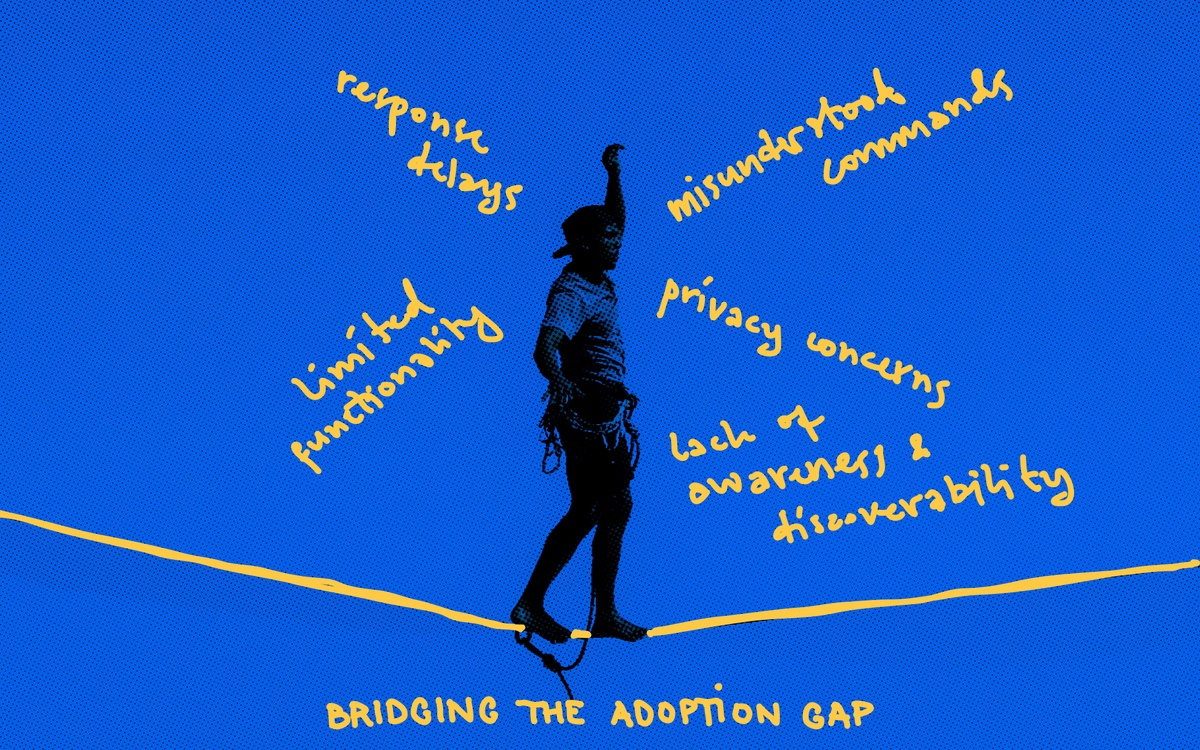

Blockers to Adoption

Despite its potential, several key barriers prevent Voice AI from reaching mass adoption:

Quality & Reliability

According to our survey, several factors limit Voice AI’s effectiveness:

- Speech Recognition Errors: Over 80% of respondents reported that misunderstood commands remain a common frustration.

- Response Delays: About 2 in 5 users say slow responses lead them to repeat themselves, which creates confusion.

- Environmental Challenges: Approximately ⅓ of respondents find that background noise, multiple speakers, and weak network connections often degrade Voice AI performance.

- Limited Functionality: About a quarter of users report that Voice AI’s limited functionality prevents them from completing tasks.

- Lack of Interruptibility: 1 in 5 Voice AI users say some systems struggle to recognise when they want to interrupt the AI mid-response.
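Interruptibility (often called "barge-in") is commonly handled by monitoring the microphone while the assistant is speaking and stopping playback once the user has clearly started talking. The Python sketch below assumes per-frame voice-activity decisions are already available, for example from a VAD running on echo-cancelled audio so the assistant's own voice isn't mistaken for the user's. `find_barge_in` and `min_frames` are hypothetical names; requiring several consecutive speech frames is one simple way to avoid cutting a response off on a cough or click.

```python
from typing import Iterable, Optional


def find_barge_in(mic_speech_flags: Iterable[bool], min_frames: int = 3) -> Optional[int]:
    """Return the index of the frame at which playback should stop, or None.

    mic_speech_flags: per-frame voice-activity decisions for the microphone,
    captured while the assistant is talking (assumed echo-cancelled).
    min_frames: consecutive speech frames required before interrupting.
    """
    run = 0
    for i, is_speech in enumerate(mic_speech_flags):
        run = run + 1 if is_speech else 0  # reset on any non-speech frame
        if run >= min_frames:
            return i
    return None


# A one-frame blip (index 1) is ignored; sustained speech from index 3 triggers at index 5.
print(find_barge_in([False, True, False, True, True, True, False]))  # 5
```

With 20 ms frames, `min_frames=3` means the user must speak for about 60 ms before the assistant stops. Tuning this value trades responsiveness against false interruptions.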

Discoverability & Awareness

A significant challenge for Voice AI is that users often don’t know it is there. Even frequent AI users are often unaware of voice features built into apps they already use, such as ChatGPT's advanced voice mode or WhatsApp's live mode. For instance, ChatGPT recently enhanced its integration with WhatsApp, allowing users to send voice messages and images to the chatbot, yet many users may never notice these features (Tom’s Guide, 2024). Features that users don’t know about don’t get used.

Our survey found that traditional voice assistants lead newer entrants in adoption: nearly 70% of respondents use Siri, 56% use Alexa, and 47% use Google Assistant, compared with 34% for ChatGPT and 18% for Gemini. Awareness of newer voice-enabled experiences remains low, which limits their adoption.

Even when users do find Voice AI, they often aren’t sure how to interact with it. Early testers of Elyce shared: “I don’t know how best to use this.” and “I don’t think people will instinctively know how to interact with it unless they’re already familiar with voice AI.” The lack of prompts, onboarding, and clear UI signals left new users frustrated and disengaged. For Voice AI to gain mainstream adoption, it must be visible and intuitive. The best AI experiences don’t wait for users to guess what they can do; they guide them. Simple, proactive nudges such as “I can also send you this response as a document” or “Want me to summarise this conversation?” can make all the difference. Without clearly surfaced capabilities, even the most advanced Voice AI risks becoming invisible.
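The proactive nudges described above can be as simple as offering one relevant, not-yet-used capability at the right moment. Below is a minimal Python sketch; the capability names, the word-count and turn-count thresholds, and the `UserProfile` and `pick_nudge` names are all hypothetical, chosen only to show the idea of surfacing each capability once, when it is relevant.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

# Hypothetical capability catalogue: capability id -> spoken nudge.
NUDGES = {
    "export_document": "I can also send you this response as a document.",
    "summarise": "Want me to summarise this conversation?",
}


@dataclass
class UserProfile:
    used: Set[str] = field(default_factory=set)    # capabilities the user has already used
    nudged: Set[str] = field(default_factory=set)  # capabilities already suggested once


def pick_nudge(profile: UserProfile, response_words: int, turns: int) -> Optional[str]:
    """Return at most one nudge for a capability that is relevant now and still new to the user."""
    relevant = []
    if response_words > 150:  # long answers may be easier to read as a document
        relevant.append("export_document")
    if turns >= 6:  # long conversations may benefit from a summary
        relevant.append("summarise")
    for capability in relevant:
        if capability not in profile.used and capability not in profile.nudged:
            profile.nudged.add(capability)  # don't repeat the same suggestion
            return NUDGES[capability]
    return None


profile = UserProfile()
print(pick_nudge(profile, response_words=200, turns=2))  # suggests exporting as a document
print(pick_nudge(profile, response_words=200, turns=2))  # None: already suggested
```

Limiting output to one nudge per turn, and never repeating one, is a design choice meant to guide new users without nagging experienced ones.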

Privacy Concerns

Many of our survey respondents were hesitant to use Voice AI because of privacy concerns, including:

- Half of respondents reported concerns about devices always listening

- About ⅓ of respondents fear their conversations being recorded or stored

- Over 1 in 10 respondents worry about personal data ownership and usage

Our early testing echoed this discomfort: some users described having an AI listen to them as “freaky” or “creepy.” All participants struggled to tell when our app was actively listening or processing. Users need to feel in control of their data and trust that Voice AI listens only when intended. To earn that trust, companies must communicate their privacy measures clearly and give users full control over their data. Clearer UX signals, such as explicit indicators for active listening, combined with transparent encryption and data retention policies, can help ease these fears.
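One way to make "listens only when intended" concrete is to drive both the microphone and the on-screen indicator from a single state variable, so the UI can never claim the mic is off while audio is being kept. The Python sketch below is illustrative only: the four states, the allowed transitions, the indicator labels, and the `VoiceSession` class are assumptions, not a description of how Elyce or any specific product works.

```python
from enum import Enum


class State(Enum):
    IDLE = "idle"
    LISTENING = "listening"
    PROCESSING = "processing"
    SPEAKING = "speaking"


# Hypothetical allowed transitions; SPEAKING -> LISTENING allows a follow-up question.
TRANSITIONS = {
    State.IDLE: {State.LISTENING},
    State.LISTENING: {State.PROCESSING, State.IDLE},
    State.PROCESSING: {State.SPEAKING, State.IDLE},
    State.SPEAKING: {State.LISTENING, State.IDLE},
}

# What the user sees in each state.
INDICATOR = {
    State.IDLE: "Mic off",
    State.LISTENING: "Listening",
    State.PROCESSING: "Thinking",
    State.SPEAKING: "Speaking",
}


class VoiceSession:
    def __init__(self) -> None:
        self.state = State.IDLE
        self.indicator = INDICATOR[self.state]
        self.captured: list = []

    def transition(self, new_state: State) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.state.name} -> {new_state.name} is not allowed")
        self.state = new_state
        self.indicator = INDICATOR[new_state]  # indicator changes in the same step as the state

    def on_audio(self, chunk: bytes) -> bool:
        """Keep microphone audio only while LISTENING; discard it otherwise."""
        if self.state is State.LISTENING:
            self.captured.append(chunk)
            return True
        return False


session = VoiceSession()
print(session.on_audio(b"\x00\x01"), session.indicator)  # False Mic off
session.transition(State.LISTENING)
print(session.on_audio(b"\x00\x01"), session.indicator)  # True Listening
```

Tying capture and indicator to one state avoids the ambiguity our testers reported, because there is no separate flag that could drift out of sync with what the user sees.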

Business Models

Voice AI providers charge customers by API call volume, offer tiered pricing plans based on usage, and provide custom development services for specific needs. These models centre on high-quality speech recognition and text-to-speech, with features such as language support, voice customisation, and integration flexibility.
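To make usage-based tiered pricing concrete, here is a small Python sketch of a graduated scheme, in which each tier's rate applies only to the calls that fall within that tier. The tier boundaries and per-call prices are invented for illustration and do not reflect any provider's actual rates; some providers instead use volume pricing, where a single rate applies to all calls once a threshold is reached.

```python
from typing import List, Optional, Tuple

# Hypothetical graduated tiers: (upper bound on monthly API calls, or None for no limit; USD per call).
TIERS: List[Tuple[Optional[int], float]] = [
    (10_000, 0.004),
    (100_000, 0.003),
    (None, 0.002),
]


def monthly_cost(calls: int, tiers: List[Tuple[Optional[int], float]] = TIERS) -> float:
    """Bill each call at the rate of the tier it falls into."""
    cost = 0.0
    lower = 0
    for upper, price in tiers:
        if calls <= lower:
            break
        top = calls if upper is None else min(calls, upper)
        cost += (top - lower) * price
        if upper is None:
            break
        lower = upper
    return round(cost, 2)


print(monthly_cost(50_000))  # 10,000 x $0.004 + 40,000 x $0.003 = 160.0
```

Graduated tiers avoid the price cliff that volume pricing can create, where one extra call changes the rate for every call in the month.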

Third-party Voice AI developers want simple, reliable deployment options and well-documented, well-supported cloud APIs that are highly configurable for their specific interactions. The leading services will combine best-in-class LLM performance with voice response infrastructure that “just works” across all devices (iOS, Android, IoT, etc.).

Summary & Future Outlook

Our research suggests that while Voice AI adoption is steadily growing, its long-term success depends on overcoming key barriers. To cross the chasm, Voice AI must:

- Improve accuracy & reliability, especially acoustic handling in noisy environments

- Enhance user experience and discoverability, so people know what Voice AI can do

- Move beyond basic commands toward real, seamless integrations

- Build trust through transparency, so users feel safe using the technology

Beyond consumer use, Voice AI is also expanding into industrial settings such as manufacturing, logistics, and robotics, with the aim of improving efficiency, safety, and automation. In manufacturing, workers use voice commands to control machines hands-free, reducing errors and downtime (Springer, 2023). Logistics firms use Voice AI for fleet coordination and warehouse operations to improve speed and accuracy (Logistics IT, 2024). Robotics companies are also integrating natural language interfaces for smoother human-machine collaboration, as seen in Apptronik’s Apollo, a robot designed for warehouses and manufacturing plants (Reuters, 2025). As Voice AI improves in accuracy, acoustic handling, and user experience, its role in streamlining industrial operations is expected to grow.

If the challenges outlined in this article are addressed, Voice AI could become an indispensable, intelligent assistant that people actively rely on every day. The future of Voice AI isn’t just about talking to machines; it’s about natural, intuitive interactions that fit seamlessly into our lives.

----------------------

About SMPL

Synthetic Media Processing Laboratory (SMPL) was founded in 2021 by audio pioneers from Skype, Microsoft, Amazon, and BlackBerry to bring efficient, natural audio to the world through a unique blend of deep machine learning and traditional digital signal processing.

SMPL’s technology powers billions of real-time communication (RTC) minutes daily for over four billion users worldwide. But that was yesterday.

Today, SMPL is leading the next wave of innovation in natural, conversational Voice AI, breaking new ground in real-time audio and defining the future of human-machine interaction.

Voice AI isn’t just another RTC use case; it opens the door to a generational leap in audio technology. Machine listening and speech enable new interaction models that are not bound by the constraints of human hearing and speaking. SMPL is pioneering these advancements, introducing capabilities that will redefine RTC performance and unlock the full potential of Voice AI.