Siri’s Struggles & Apple’s AI Reboot

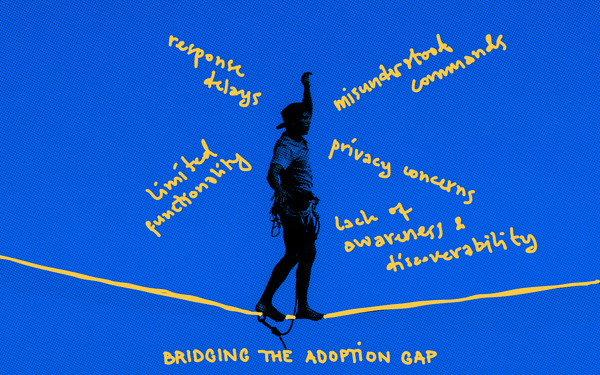

Siri is slow, dumb, and unhelpful because Apple bet on on-device processing, fell behind in LLM research, and built it on a fragile, outdated system. They’re trying to catch up, but competitors are already moving to the next level. It looks grim.

However, Apple is awake, has recently invested billions in AI, and is reportedly working on its own LLM (Ajax).

They need to move faster, but Apple’s AI progress is tied to its hardware releases, meaning innovation happens at the iPhone/iOS release cadence, which is too slow. Will they rebuild Siri from scratch? License AI models from OpenAI or Google (which would be embarrassing for them)? Sacrifice privacy to compete with a cloud-first approach?

OpenAI, Google, and Meta have huge research teams constantly pushing state-of-the-art LLMs. Siri was built on pre-LLM tech; it’s a static voice assistant with simple rule-based commands, not real AI. Additionally, there has been no serious innovation in traditional speech recognition and natural language processing approaches for years while competitors switched tracks and moved to LLMs.

Apple prioritized on-device processing for privacy and because they are inherently all about the device. But running AI on-device means using smaller models with fewer parameters, which generally means worse accuracy. Apple has been stuck with old-school, rule-based AI for too long. The competitors’ cloud-based models are constantly updated and have totally eclipsed Siri’s capabilities.
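To make the size constraint concrete, here is a rough back-of-the-envelope sketch of how much memory a model's weights alone would need. The parameter counts and bit widths are hypothetical round numbers chosen for illustration, not figures for any Apple or competitor model, and the estimate ignores activations and runtime overhead.

```python
def weight_memory_gb(num_parameters: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in gigabytes (10^9 bytes).

    Weights only: activations, caches, and runtime overhead are not counted.
    """
    bytes_total = num_parameters * bits_per_weight / 8
    return bytes_total / 1e9


# Hypothetical sizes for illustration only.
small_on_device = weight_memory_gb(3e9, 4)    # 3B parameters at 4 bits each
large_in_cloud = weight_memory_gb(70e9, 16)   # 70B parameters at 16 bits each

print(f"small model: {small_on_device:.1f} GB, large model: {large_in_cloud:.1f} GB")
```

Under these assumptions the small model fits in a phone's memory budget and the large one clearly does not, which is the trade-off the paragraph above describes.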

So, why am I still betting on Apple?

Despite all of this, Apple still has the most popular voice assistant on the market. Our recent survey showed that 72% of Voice AI users use Siri, far outpacing anyone else. This is proof that tight integration with their platform provides a massive advantage and protective moat, but they can’t lag forever.

The Long Game

Apple holds another ace. They own the wake-word, screen awareness, and many of the most important apps on iOS, giving them the ability to make Siri agentic to a degree that third parties cannot.

Again, I’m not worried about Siri. In competitive strategy, there is the “fast follower” concept. When an upstart innovates and grabs the market adjacent to a larger leader, that leader reacts with a fast copy of the product or feature. Think TikTok and Instagram Reels. Microsoft proved over and over again that if you are large enough, you don’t even need to be fast. They perfected the “slow follower” approach, investing steadily and slowly bludgeoning the competition to death. Apple can afford that kind of patience, too.

Hybrid AI & Apple’s Hardware Superiority

AI agents will not be exclusively on-device or exclusively cloud-based; they will be both. The winning path is a hybrid AI system that leverages both on-device processing and cloud-based models.

Apple has the largest ecosystem and the most potent on-device hardware for hybrid AI (A-series and M-series chips with Neural Engines). In the hybrid model, on-device AI handles low-latency, privacy-sensitive tasks (e.g., detecting wake words, filtering background noise, and running local VAD/AEC). Cloud AI handles complex reasoning, large-scale LLM inference, and deep personalization that require more computing power.

At SMPL, we have proven that the split system works much better than device-only or cloud-only for Voice AI. The device preprocesses audio input while the cloud does heavy lifting. Specifically, the device eliminates echo and background noise (AEC, noise suppression), detects when the user speaks (VAD), detects interruptions, barge-ins, and user intent shifts, and manages turn-taking without sending everything to the cloud. It’s faster, more accurate, and more natural.
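As a minimal sketch of the idea of gating audio on the device before anything reaches the cloud, the snippet below uses a simple energy threshold as a stand-in for voice activity detection. This is not SMPL's method (production VAD typically relies on trained models), and the function names, `threshold`, and `hangover` values are illustrative assumptions.

```python
import math


def frame_rms(frame):
    """Root-mean-square level of one frame of samples (floats in [-1, 1])."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def detect_speech(frames, threshold=0.02, hangover=2):
    """Return one boolean per frame: True while speech is considered active.

    threshold and hangover are illustrative values, not tuned settings.
    The hangover keeps the decision on for a few frames after the level
    drops, so short pauses do not chop an utterance into pieces.
    """
    flags = []
    remaining = 0
    for frame in frames:
        if frame_rms(frame) >= threshold:
            remaining = hangover
            flags.append(True)
        elif remaining > 0:
            remaining -= 1
            flags.append(True)
        else:
            flags.append(False)
    return flags


def frames_to_upload(frames, threshold=0.02, hangover=2):
    """Keep only frames flagged as speech; only these would leave the device."""
    flags = detect_speech(frames, threshold, hangover)
    return [f for f, keep in zip(frames, flags) if keep]
```

Even this crude gate shows the design choice: silence never leaves the device, so the cloud sees less audio and the device can react to speech onset without a network round trip.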

In Apple’s case, simple commands (e.g., setting a timer, controlling music) will be processed locally and instantly. Cloud AI will handle complex conversational reasoning (multi-turn interactions, generative AI), retrieve knowledge beyond what can be stored on the device, and draw on user history, preferences, and long-term memory to personalize responses.
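The local-versus-cloud split described here can be sketched as a small routing function. This is an illustrative assumption about how such a router might look, not Apple's implementation; the intent names, the `Request` fields, and the `route` function are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical intents that a device could handle without the cloud.
LOCAL_INTENTS = {"set_timer", "play_music", "pause_music"}


@dataclass
class Request:
    intent: str                          # hypothetical intent label
    multi_turn: bool = False             # part of an ongoing conversation
    needs_world_knowledge: bool = False  # needs facts not stored on the device


def route(request: Request, cloud_available: bool = True) -> str:
    """Return 'device', 'cloud', or 'unavailable' for a request."""
    is_simple = (
        request.intent in LOCAL_INTENTS
        and not request.multi_turn
        and not request.needs_world_knowledge
    )
    if is_simple:
        return "device"        # low-latency path, nothing leaves the device
    if cloud_available:
        return "cloud"         # complex reasoning or knowledge retrieval
    return "unavailable"       # complex request with no connectivity


print(route(Request("set_timer")))
print(route(Request("open_question", needs_world_knowledge=True)))
```

Simple commands keep working offline in this sketch, while anything conversational or knowledge-heavy is handed to the larger cloud model.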

This reduces latency (since time-sensitive processing happens locally), preserves privacy (not all data is sent to the cloud), and lowers compute costs (less server draw).

This cooperative system design is not limited to voice; it will extend to almost every other AI-powered application.

Hybrid AI in Action:

- Keyboard AI (on-device autocorrect, voice typing improvements, and real-time translation).

- On-device writing assistance, smart replies, and summarization without sending data to the cloud.

- Image processing & generation (e.g., AI-enhanced Photos, emojis, and visual search) will run locally when possible.

- Device AI will instantly adapt interactions, notifications, and UI elements based on personal usage patterns, and will understand user intent across apps without cloud processing (e.g., automatically suggesting app actions based on routine).

- Computational photography has always been a core focus. On-device AI will enhance photo editing, video stabilization, live adjustments, and AI-powered real-time video enhancements like background blurring, lighting correction, and object removal (beyond FaceTime).

- Apple Vision Pro and iPhones will use AI-driven real-time scene analysis to detect objects, perform 3D mapping, and create AR overlays.

- Siri 2.0 will use on-device AI for faster, context-aware, and more natural interactions (compared to cloud-based approaches). It will also support live translation and real-world interaction (like understanding menus, signs, or hand gestures).

- Developers will also access on-device AI through Apple’s frameworks (e.g., Core ML, which can run models on the Neural Engine). Device AI will allow third-party apps to leverage ML without requiring cloud APIs, enhancing gaming, finance, health tracking, and automation.

There is continued interest in fully on-device AI, so the trend will continue to be toward the device, not away from it.

Final Thought: Hybrid AI is the Future, and Apple is in the Lead.

Like Apple, SMPL is betting on the hybrid model for Voice AI. Our implementations show remarkable improvements over what is currently available from the (cloud-only) tech giants (check out our demos at simplertc.com).

Apple is uniquely positioned for hybrid AI and the coming wave of Voice AI agents. But what about the rest of the industry?

Google, Samsung, Xiaomi, OnePlus, Qualcomm: You must solve this problem for your customers and the Android ecosystem. We can help. Our lightweight, on-device acoustic solution makes Android’s Voice AI fast, responsive, and more natural than iOS without new hardware.

----------------------

About SMPL

Synthetic Media Processing Laboratory (SMPL) was founded in 2021 by audio pioneers from Skype, Microsoft, Amazon, and BlackBerry to bring efficient, natural audio to the world through a unique blend of deep machine learning and traditional digital signal processing.

SMPL’s technology powers billions of RTC minutes daily for over four billion users worldwide. But that was yesterday.

Today, SMPL is leading the next wave of innovation in natural, conversational Voice AI—breaking new ground in real-time audio and defining the future of human-machine interaction.

Voice AI isn’t just another RTC use case—it opens the door for a generational leap in audio technology. Machine listening and speech enable new interaction models unbound by human hearing and speaking constraints. SMPL is pioneering these advancements, introducing capabilities that will redefine RTC performance and unlock the full potential of Voice AI.